Allies warn of cyber divide as US firms gatekeep powerful Mythos AI

Goldman Sachs is working closely with Mythos to protect itself, UK's AISI tested Mythos, which outperformed other models, Bain & Co. was easily exposed by pentesters, Kraken suffered two insider security incidents, EU to abandon Chinese inverters, much more

Check out my latest CSO piece that looks at how two studies underscore that CISOs should prepare for wave after wave of patching, exploits, and autonomous attacks, because Claude Mythos Preview and its successors structurally alter cybersecurity.

Metacurity is the only daily cybersecurity briefing built for clarity, not agendas—no vendor spin, no echo chamber, just sharp, original aggregation and analysis of what actually matters to security leaders.

If you rely on Metacurity to cut through the noise on policy, industry shifts, and security research, consider supporting us with a paid subscription. Independent coverage like this only exists because readers decide it’s worth it.

Countries across Europe and key US allies are raising concerns that they are being excluded from early access to Anthropic’s most advanced cybersecurity AI system, a restriction that could reshape the balance of cyber defense capabilities among nations.

Anthropic’s so-called “Mythos” preview, developed under its Project Glasswing initiative, is being tightly controlled due to its ability to identify and exploit software vulnerabilities at scale, including previously unknown flaws. Access has been limited to a small group of primarily US-based technology companies and security partners, including firms such as Microsoft and Apple, according to multiple reports.

That restricted rollout is creating what analysts and officials describe as a growing divide between countries with early visibility into vulnerabilities and those forced to wait.

European officials said they have largely been left “in the dark” about the system’s capabilities and outputs, despite the potential impact on their own infrastructure. While some governments are in discussions with Anthropic, they have not been granted the same level of access as US partners, raising concerns about dependence on foreign companies for critical cybersecurity insights.

The issue is compounded by the fact that Mythos has not been broadly released, limiting the ability of European regulators to assert oversight or impose access requirements under existing digital governance frameworks.

In Canada, the restricted access is being characterized as the emergence of a new kind of cybersecurity concentration, in which a small group of companies—and by extension, their home countries—gain early knowledge of systemic vulnerabilities and control over when and how those flaws are disclosed or mitigated.

Such dynamics could give those inside the program a significant defensive advantage, allowing them to patch systems before vulnerabilities are widely known, while others remain exposed.

In Australia, officials and analysts have warned that the lack of access could translate directly into increased risk. Without the ability to use the system to identify weaknesses proactively, countries outside the initial cohort may face a delay in securing critical infrastructure against increasingly sophisticated cyber threats.

The concerns come amid expectations that similar AI capabilities will eventually proliferate more widely. However, experts note that even a temporary gap in access could prove consequential, particularly if adversaries develop or acquire comparable tools before excluded nations have had an opportunity to harden their systems. (Pieter Haeck and Sam Clark / Politico EU, Andrew Willis / The Globe and Mail, Isaac Francis / Grafa)

Related: Gizmodo, Wall Street Journal, Financial Times, Marcus on AI

Goldman Sachs’s chief executive, David Solomon, has said he is “hyper-aware” of the capabilities of Anthropic’s Mythos AI model and is working “closely” with the tech firm after it issued warnings about the cybersecurity risk it poses.

The US bank had been monitoring the rapid advances in artificial intelligence, including large language models (LLMs), as part of wider efforts to protect itself from hackers.

“Obviously, the LLMs are making rapid progress, and we’re hyper-aware of the enhanced capabilities of these new models with the help of the US government and the model publishers,” Solomon told analysts on an earnings call on Monday.

That included Anthropic, the company behind the Claude family of AI tools. Last week, it claimed that its latest model, Mythos, posed an unprecedented risk because of its ability to expose flaws in IT systems.

“AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities,” Anthropic said. “The fallout – for economies, public safety, and national security – could be severe.”

Solomon said: “We’re aware of Mythos and its capabilities … We have the model. We’re working closely with Anthropic and all of our security vendors to kind of harness frontier capabilities wherever it’s possible. And this will continue to be an important focus.

“We are very focused on supplementing our cyber and infrastructure resilience. And this is part of our ongoing capabilities that we have been investing in, and are accelerating our investment in.” (Kalyeena Makortoff and Dan Milmo / The Guardian)

Related: The International News, Business Insider, Bloomberg Law, The Cyber Express

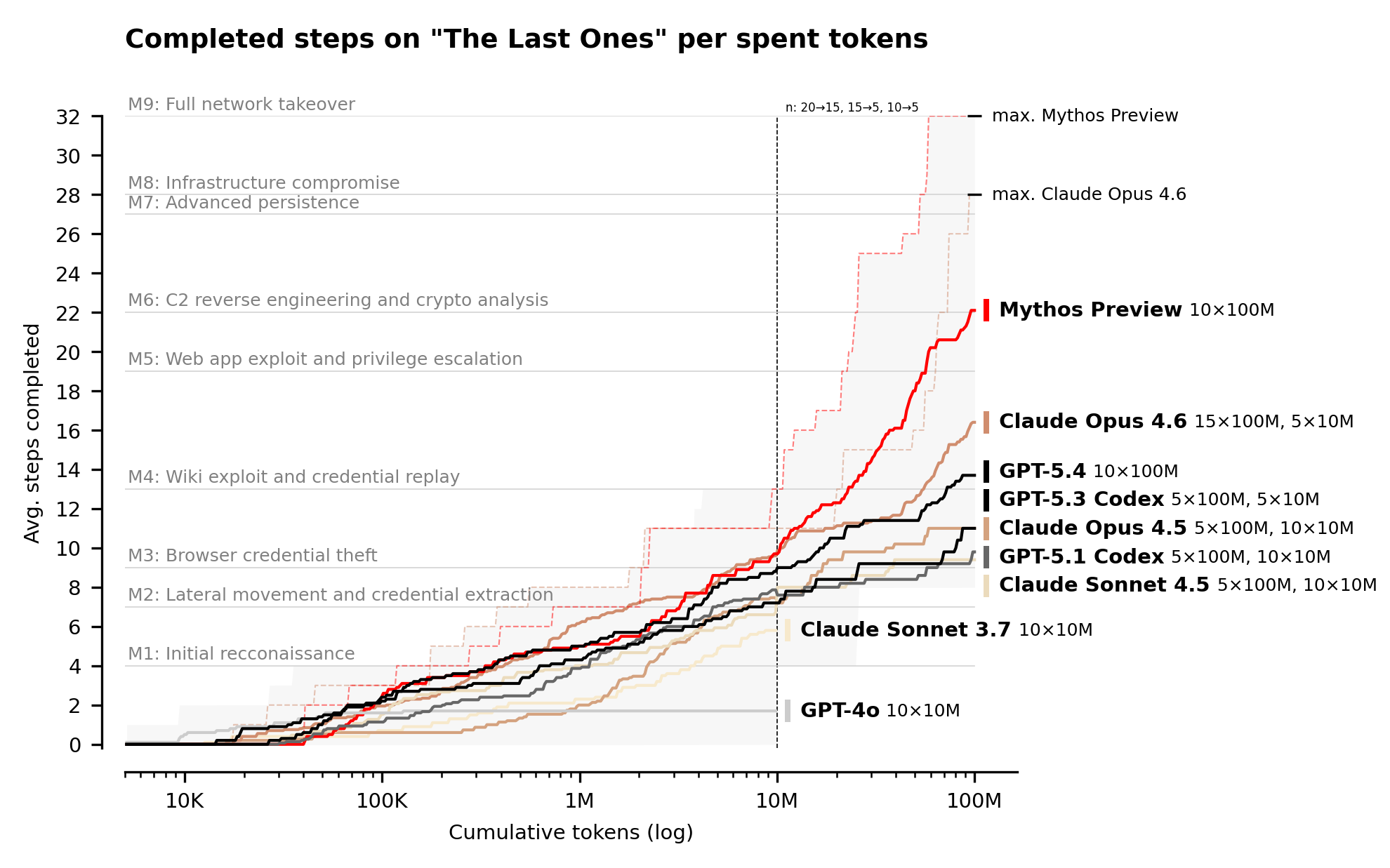

The UK's AI Security Institute (AISI) evaluated Anthropic’s Claude Mythos Preview to assess its cybersecurity capabilities and found that Mythos Preview represents a step up over previous frontier models in a landscape where cyber performance was already rapidly improving.

AISI noted that two years ago, the best available models could barely complete beginner-level cyber tasks. In controlled evaluations where Mythos Preview was explicitly directed and given network access to do so, AISI observed that it could execute multi-stage attacks on vulnerable networks and discover and exploit vulnerabilities autonomously – tasks that would take human professionals days of work.

Mythos beat the competition in a 32-step corporate network attack simulation spanning initial reconnaissance through to full network takeover, which AISI estimates would take humans 20 hours to complete. (AISI)

Related: CSO Online, CyberScoop, Decrypt, diginomica, The Cyber Express, Hacker News, r/singularity

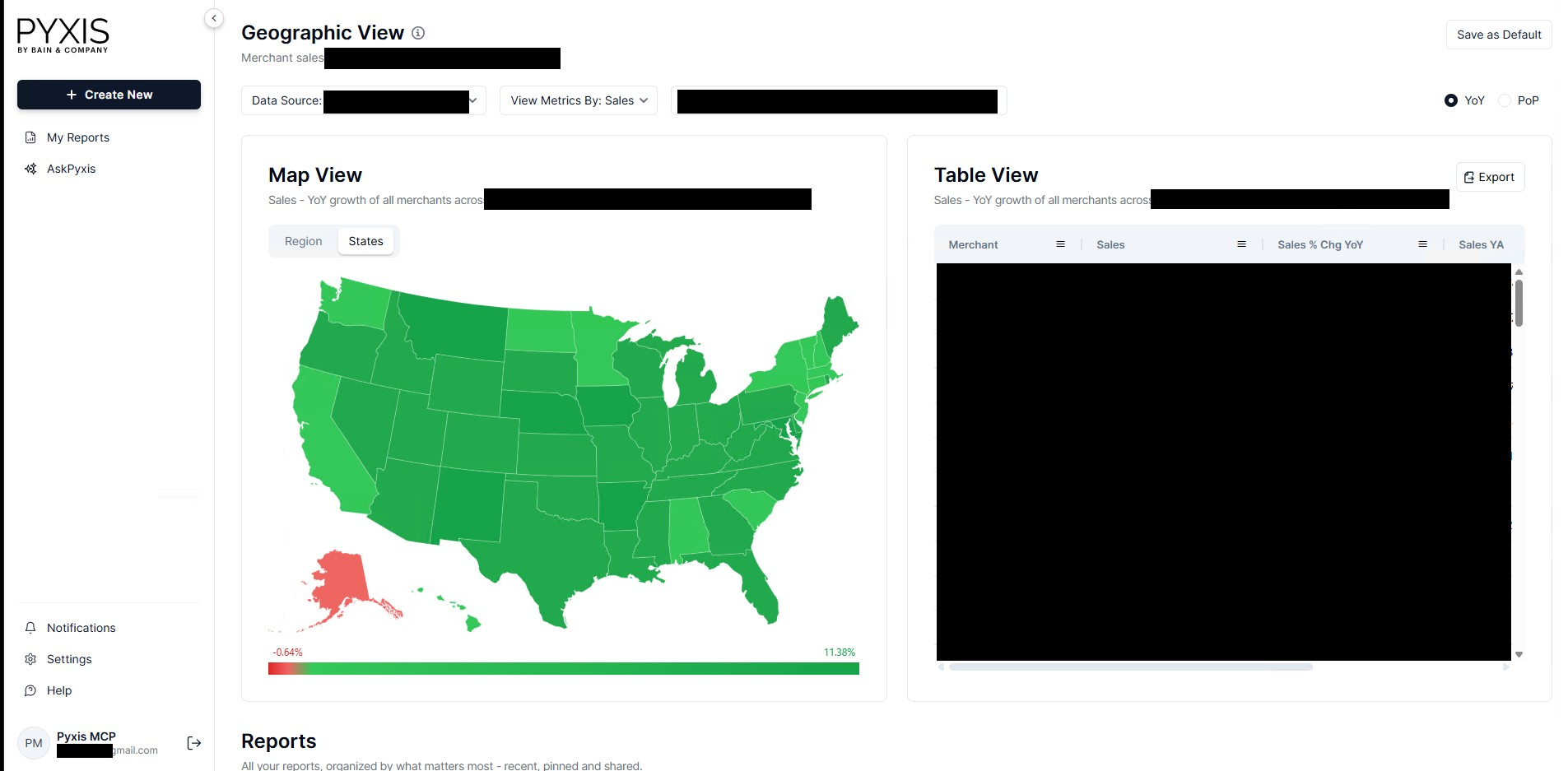

Pentesting company CodeWall gained access to one of Bain & Co’s internal AI tools, weeks after exposing cybersecurity flaws in a system at rival consultancy McKinsey, highlighting the risks as elite advisers push to adopt new technology.

CodeWall said it took just 18 minutes to make a breakthrough towards accessing Bain’s Pyxis platform, used by part of the consultancy’s private equity practice to help assess companies for due diligence and investment analysis.

The hacker said it had been able to view nearly 10,000 AI conversations held with Pyxis’s AI chatbot, which helps users analyze billions of consumer transactions collected on a database provided by a third-party supplier.

Those conversations included queries from staff at multiple Bain clients, CodeWall said, adding that examples included consumer food brands asking questions about their rivals.

CodeWall said its autonomous agent had been able to gain access using a username and password that had been written into publicly available web code.
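For readers who want to check their own front-end code for this class of mistake, here is a minimal sketch of a hardcoded-credential scan. It is not CodeWall's method or tooling; the pattern, the function name `find_hardcoded_credentials`, and the sample snippet are all hypothetical, and real secret scanners use far larger rule sets.

```python
import re

# Hypothetical, deliberately small pattern: a credential-like key name,
# an assignment (":" or "="), then a quoted string literal.
CREDENTIAL_PATTERN = re.compile(
    r"""(?P<key>user(?:name)?|pass(?:word)?|passwd|api[_-]?key|token)
        \s*[:=]\s*
        (?P<quote>["'])(?P<value>[^"']{4,})(?P=quote)
    """,
    re.IGNORECASE | re.VERBOSE,
)


def find_hardcoded_credentials(source: str) -> list[tuple[int, str]]:
    """Return (line_number, key_name) for each suspicious literal in source."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for match in CREDENTIAL_PATTERN.finditer(line):
            findings.append((line_number, match.group("key")))
    return findings


if __name__ == "__main__":
    # Made-up JavaScript snippet standing in for publicly served web code.
    sample = (
        'const cfg = {\n'
        '  username: "demo_user",\n'
        '  password: "not-a-real-secret",\n'
        '};\n'
    )
    for line_number, key in find_hardcoded_credentials(sample):
        print(f"line {line_number}: possible hardcoded {key}")
```

A scan like this only flags candidates for human review; it will miss obfuscated or concatenated values and will raise false positives on placeholder strings.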

The hacker says it focuses on companies that have published guidelines on how “ethical” hackers should probe their systems for cybersecurity flaws.

The hacker said it had discovered employee email addresses and security tokens, “meaning an attacker could impersonate any Bain employee” or create new Pyxis account logins. (Ellesheva Kissin / Financial Times)

Related: CodeWall

Crypto exchange Kraken disclosed two insider-related security incidents involving support staff access to limited client data, followed by an extortion attempt by a criminal group, according to a company statement and comments from its chief security officer.

The firm said no systems were breached and no client funds were placed at risk in either case. Both incidents involved inappropriate access to internal support tools rather than core trading infrastructure, and access was revoked once identified.

Kraken’s Chief Security Officer Nick Percoco said the company is facing demands from attackers who claim to possess videos showing internal systems with client data. The group threatened to release the material unless Kraken complied.

“Our systems were never breached; funds were never at risk; we will not pay these criminals,” Percoco said in a public statement, adding that the company will not negotiate with the actors involved.

Kraken said about 2,000 client accounts were potentially viewed across both incidents, representing roughly 0.02% of its global user base. Affected users were notified, and the company said the exposed information was limited to support data rather than sensitive financial controls. (Micah Zimmerman / Bitcoin Magazine)

Related: CoinDesk, CoinPaper, Blockonomi, Unchained Crypto, PYMNTS, SQMagazine

At a top-level meeting of her 26 department chiefs in March, European Commission President Ursula von der Leyen quietly approved a plan to stop EU funds from going to clean technology projects containing Chinese inverters.

Inverters are the essential power electronics at the heart of solar and wind systems. Industry groups estimate that Chinese companies led by Huawei Technologies control more than 220 gigawatts of Europe’s installed solar capacity via the devices.

The commission wants to prevent EU money from flowing to such projects and curb research cooperation under its Horizon programme, where Chinese inverters are involved, according to four people familiar with the plan.

This ban would fit with the bloc’s ambition to support local manufacturers, who complain they are being crushed by Chinese competition. It dovetails with heightened security fears that China, seen in large parts of Europe as a geopolitical rival, could cut power to the grid should relations worsen. (Finbarr Bermingham / South China Morning Post)

Matthew Lane, who pleaded guilty to charges of hacking into the network of PowerSchool, a software provider for school systems, and stealing the personal information of more than 60 million students and 10 million teachers, said he started his criminal career as a child on Roblox, the colossal online gaming platform popular with children and teens.

Roblox noted that cybercrime is "an industry-wide challenge" and said that the gaming platform routinely reports cyber-enabled crime to law enforcement. Roblox also said it is using "cutting-edge anti-cheat systems" to stop cheating on its platform.

Yesterday, Roblox announced that, starting in June, it will offer age-checked accounts for younger users that limit what games they can play and "more closely align content access, communication settings, and parental controls with a user's age." (Mike Levine / ABC News)

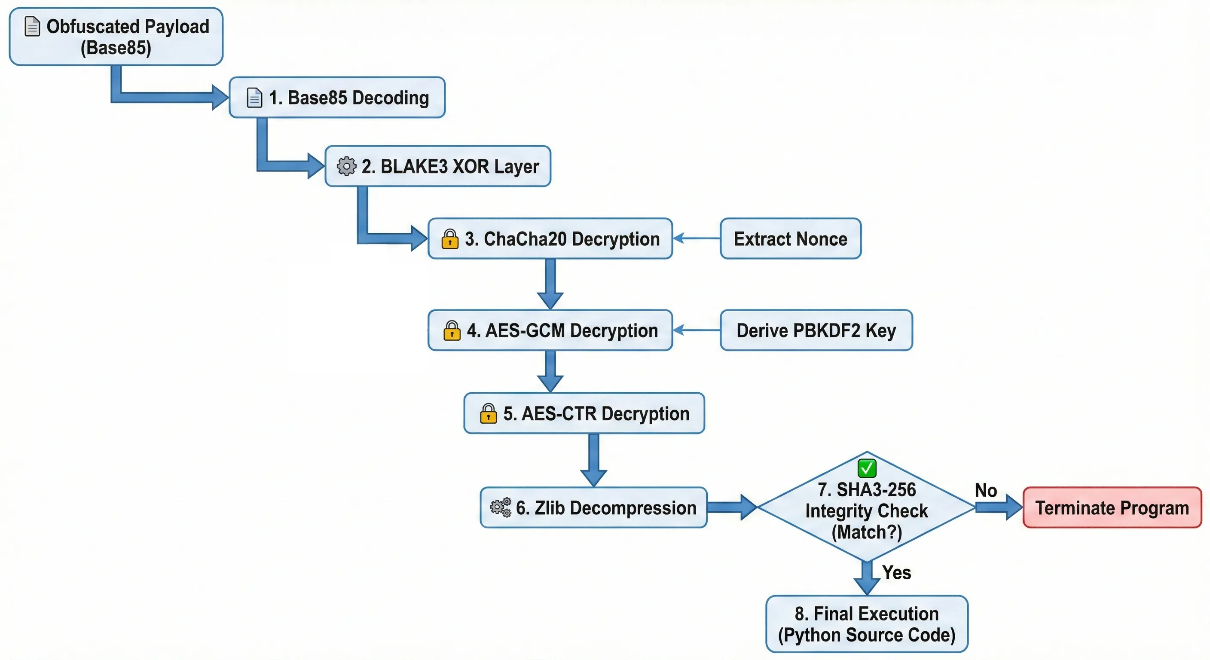

Researchers at InfoGuard report that they found a new Python-based backdoor, dubbed ViperTunnel, hiding in the networks of UK and US businesses.

The malware has reportedly been in development since late 2023 and is often deployed as a follow-up to FAKEUPDATES (SocGholish) infections. It is currently being used to maintain long-term access to systems before that access is sold to major ransomware groups like RansomHub.

Evidence suggests ViperTunnel is the work of UNC2165, a group closely linked to the notorious EvilCorp. It is often used alongside ShadowCoil, a credential-stealing tool that targets Chrome, Firefox, and Edge.

The malware has matured considerably over time. In December 2023, it was full of typos like “deamon” and “verifing,” but by September 2024, its developers were using PyOBFUSCATE to hide their work. By late 2025, it had become a professional tool with a modular design built from three parts: Wire, Relay, and Commander.

The most concerning find was a new check for TracerPid in Linux system files. Although the current attacks target Windows, the check has puzzled researchers, who suspect that the hackers could be preparing a version for Linux servers to create a cross-platform framework. (Deeba Ahmed / HackRead)
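For context on what a TracerPid check generally looks like, here is a minimal sketch. It is not ViperTunnel's code, which the report does not reproduce; the function names are hypothetical. On Linux, `/proc/<pid>/status` includes a `TracerPid` field holding the PID of any process tracing this one (for example, a debugger), or 0 if there is none.

```python
from pathlib import Path


def parse_tracer_pid(status_text: str) -> int:
    """Extract the TracerPid value from the text of a /proc/<pid>/status file."""
    for line in status_text.splitlines():
        if line.startswith("TracerPid:"):
            return int(line.split(":", 1)[1].strip())
    return 0  # Field absent: treat as not traced.


def is_being_traced(status_path: str = "/proc/self/status") -> bool:
    """Report whether the current process appears to be under a tracer."""
    path = Path(status_path)
    if not path.exists():
        # Not Linux (or /proc unavailable): the check does not apply.
        return False
    return parse_tracer_pid(path.read_text()) != 0


if __name__ == "__main__":
    print("traced" if is_being_traced() else "not traced")
```

Because the field only exists on Linux, a check like this has no purpose in a Windows-only tool, which is why its presence stands out to analysts.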

Related: InfoGuard, GBHackers, CyberSecurity News, Cyber Press

Websites that engage in “back button hijacking” might soon appear less prominently in Google Search results as part of a new spam policy.

Back button hijacking occurs when a site prevents users from “using their back button to immediately get back to the page they came from.” Users are instead sent to “pages they never visited before, be presented with unsolicited recommendations or ads, or are otherwise just prevented from normally browsing the web.”

As Google notes, this breaks the “fundamental expectation” of how a browser’s back button should work. Besides breaking browser functionality, it “breaks the expected user journey” and “results in user frustration.”

Google Search now classifies back button hijacking as a violation of its “malicious practices” spam policy, and sites that engage in it are subject to manual spam actions or automated demotions. (Abner Li / 9to5Google)

Related: Google, Hacker News

According to technical documentation and proof-of-concept exploits published on the Vulhub repository, there is a critical vulnerability in ShowDoc, a widely used online document-sharing platform designed for IT teams.

Tracked as CNVD-2020-26585, this severe security flaw allows unauthenticated remote code execution (RCE) on vulnerable servers.

The vulnerability poses a significant risk to organizations relying on outdated versions of the software for internal collaboration, as it allows attackers to completely take over the host system.

The vulnerability is rooted in an unrestricted file upload mechanism. Because the exploit requires no prior authentication, any threat actor with network access to a vulnerable ShowDoc instance can launch an attack.

ShowDoc versions before 2.8.7 fail to properly validate and sanitize files uploaded by users. Because the application does not require authentication to access the upload endpoint, remote threat actors can easily bypass security filters and upload malicious files directly to the server. (Divya / GBHackers)
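As a quick triage aid, the version boundary above can be expressed as a small check. This is a minimal sketch, assuming you already know the installed version string and that it uses plain dotted numbers; the function names are hypothetical, and how to read the version from a given deployment is not covered here.

```python
# First version outside the affected range, per the report above.
FIXED_VERSION = (2, 8, 7)


def parse_version(version: str) -> tuple[int, ...]:
    """Turn a dotted version string such as 'v2.8.6' into a tuple of ints."""
    return tuple(int(part) for part in version.strip().lstrip("vV").split("."))


def is_affected(version: str) -> bool:
    """Return True if the given ShowDoc version predates 2.8.7."""
    return parse_version(version) < FIXED_VERSION


if __name__ == "__main__":
    for candidate in ("2.8.6", "2.8.7", "2.10.0"):
        status = "affected" if is_affected(candidate) else "not affected"
        print(f"{candidate}: {status}")
```

Comparing integer tuples rather than raw strings avoids the common mistake of ranking "2.10.0" below "2.8.7".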

Related: GitHub, CyberPress, Security Affairs

Best Thing of the Day: Let's Hold AI to Account at the Outset

A woman who says her ex-boyfriend stalked her using a tool he developed on OpenAI is suing the AI giant, alleging that the company’s technology accelerated her harassment.

Worst Thing of the Day: First, They Came for the Fridges

The UK Coalition on Secure Technology, the cross-party campaign raising awareness of technological threats from hostile states, has warned that the components that allow fridges to connect to the internet are often made in China.

Closing Thought