Instructure strikes deal with ShinyHunters, who promise to destroy stolen Canvas data

US intel agencies want to evaluate AI models while Commerce seems to back away, OpenAI launches cybersecurity initiative Daybreak, Euro countries sell spyware to rights violators, Binance claims AI system saved $10b in scam losses, Shai-Hulud supply-chain campaign compromised npm and PyPI packages, more

Instructure, the maker of Canvas, the software used by thousands of schools and universities around the world, said it had reached a deal with the hackers that recently breached its systems for the return of stolen data and the destruction of any copies.

ShinyHunters, a hacking group, had claimed responsibility for the attack on Instructure, the Salt Lake City-based company that provides Canvas to about half of all colleges and universities in North America.

The hackers said they had accessed the data of more than 275 million users at nearly 9,000 schools worldwide, including private conversations between students and teachers as well as personal identifying information such as names and email addresses. Canvas was shut down for hours after the cyberattack on Thursday.

The agreement, Instructure said in a statement, involved the return of the stolen data and confirmation that the data had been destroyed at the hackers’ end. Instructure added that it had been informed that none of its customers would face extortion as a result of the theft.

“While there is never complete certainty when dealing with cybercriminals, we believe it was important to take every step within our control to give customers additional peace of mind, to the extent possible,” the company said.

Instructure did not say what it had given the hackers in exchange for the return of the data. (Qasim Nauman / New York Times)

Related: Instructure, Information Age, nltimes.nl, Mashable, r/cybersecurity, Inside Higher Ed, The Chronicle, Instructure, NL Times, RNZ, Cyber Daily

US intelligence agencies are seeking a larger role in evaluating advanced artificial intelligence systems amid growing fears that frontier AI models could be exploited for cyberattacks, espionage, and other national security threats.

At the same time, the Commerce Department’s Center for AI Standards and Innovation (CAISI) has been carrying out voluntary safety evaluations of major AI systems developed by companies including Google, Microsoft, OpenAI, Anthropic, and xAI.

The debate reflects a broader divide within the Trump administration and the technology industry over how aggressively AI should be regulated. Some officials and Silicon Valley allies favor a lighter-touch approach, arguing that excessive oversight could slow American innovation and weaken the United States in its competition with China.

Concerns escalated following discussions surrounding Anthropic’s powerful “Mythos” model and growing worries that increasingly capable AI systems could expose serious cybersecurity vulnerabilities. The White House is reportedly considering an executive order that would expand the Office of the Director of National Intelligence’s authority over AI model evaluations, although the administration’s overall messaging on AI regulation has remained inconsistent.

Separately, the US Commerce Department removed details from its website about its agreement with Google, xAI, and Microsoft to test their artificial intelligence models for security vulnerabilities.

The Commerce Department announced on May 5 that the companies would hand over new AI models before they deploy them to the public, allowing government scientists to test them for security flaws. (Cat Zakrzewski, Ellen Nakashima, and Nitasha Tiku / Washington Post and Courtney Rozen / Reuters)

Related: Associated Press

OpenAI has introduced Daybreak, a new cybersecurity initiative built around frontier AI models, Codex, and a partner network of security companies.

The program is aimed at developers, enterprise security teams, researchers, and government-linked defenders who need to find, validate, and patch software vulnerabilities earlier in the development cycle.

Daybreak positions OpenAI’s models as part of a defensive security workflow, not just a coding assistant. It brings secure code review, threat modeling, patch validation, dependency risk analysis, detection support, and remediation guidance into Codex Security. OpenAI says the goal is to help teams identify high-impact issues, generate and test patches inside repositories, and send audit-ready evidence back into existing security systems.

The rollout is tied to OpenAI’s Trusted Access for Cyber framework. Standard GPT-5.5 remains the default model for general work, while GPT-5.5 with Trusted Access is meant for verified defenders handling secure code review, vulnerability triage, malware analysis, detection engineering, and patch validation. GPT-5.5-Cyber is being positioned as a more permissive limited-preview model for specialized authorized workflows, including red teaming, penetration testing, and controlled validation. (Alexey Shabanov / AI News)

Related: OpenAI, Decrypt, MacRumors, The Verge, r/singularity

Human Rights Watch reports that European companies have sold controversial surveillance technologies to countries known for violating human rights.

The group's findings indicate that European Union regulations introduced in 2021 to rein in exports of the technology are not being properly enforced.

The New York-based nonprofit obtained trade data and found that at least six EU member states — including Bulgaria, the Czech Republic, Denmark, Finland, and Poland — had sold surveillance technologies to more than two dozen countries with documented histories of human rights violations, including repression of activists and journalists.

The data analyzed by Human Rights Watch did not specify the names of the companies that manufactured the surveillance technology or reveal the specific end users in the destination countries. The group obtained the export records through freedom of information requests, which provide only a partial picture of sales across the bloc. Several European countries known to export surveillance equipment, including France, Germany, Greece, Italy, and Spain, declined to share their data, it said.

According to the report, Bulgaria exported a variety of surveillance technologies to authorities in more than 20 countries, including Azerbaijan, the United Arab Emirates, Vietnam, Uganda, and Jordan. These countries receive low freedom scores according to nonprofit Freedom House, which charts political rights and civil liberties across the world. (Ryan Gallagher / Bloomberg)

Related: Human Rights Watch

Binance claims its AI security system has collectively helped save millions of users $10.53 billion in potential losses from scams between Q1 2025 and Q2 2025.

According to a blog post published on Monday, the world’s largest crypto exchange has rolled out about two dozen AI-powered security features to help protect users from crypto scams.

"Computer vision is used to detect fake payment proofs, while real-time language analysis helps surface scam patterns in P2P transactions. AI-driven decisioning now powers 57% of fraud controls, contributing to a 60–70% reduction in card fraud rates compared to industry benchmarks," Binance wrote.

"On the identity verification front, Binance’s KYC systems continue to evolve to counter increasingly sophisticated deepfakes and synthetic identities, delivering up to 100x gains in operational efficiency compared to traditional manual processes without AI," the exchange continued.

In Q1 2026 alone, Binance claims to have safeguarded $1.98 billion in funds from 22.9 million scam and phishing attempts. The exchange has also helped recover $12.8 million worth of funds from 48,000 cases. (Daniel Kuhn / The Block)

Related: Binance, The Crypto Times, CoinCentral, CoinMarketCap, Cryptopolitan, Decrypt

Hundreds of packages across npm and PyPI have been compromised in a new Shai-Hulud supply-chain campaign delivering credential-stealing malware targeting developers.

The attacker hijacked valid OpenID Connect (OIDC) tokens to publish malicious package versions with verifiable provenance attestation (SLSA Build Level 3).

Attributed to the TeamPCP threat group, the attack started with compromising dozens of TanStack and Mistral AI packages but quickly extended to other popular projects, like Guardrails AI, UiPath, and OpenSearch.

The Shai-Hulud campaign emerged last September and had multiple iterations, some of them exposing hundreds of thousands of developer secrets in automatically generated GitHub repositories. Among more recently compromised projects are the Bitwarden CLI package and the official SAP packages.

The latest attack wave occurred yesterday with the threat actor publishing multiple malicious packages in the TanStack namespaces on the Node Package Manager (npm), and then spreading to other projects using stolen CI/CD credentials. (Bill Toulas / Bleeping Computer)

Related: Endor Labs, Aikido, TanStack

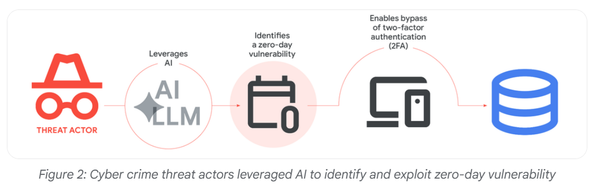

Commercial large language models (LLMs) were used as part of a cyberattack that targeted a municipal water and drainage utility provider in Mexico, cybersecurity researchers at Dragos have warned.

A “significant compromise” of the water infrastructure provider’s IT environment escalated into an attempted attack against the organization’s operational technology (OT), said a Dragos report published on May 6.

The research suggested that attackers used Anthropic’s Claude AI and OpenAI’s GPT models to aid with planning and conducting the campaign.

The cyberattack against the water facility in the Monterrey metropolitan area of Mexico took place between December 2025 and February 2026.

Dragos analyzed 350 artifacts associated with the attack, most of which were AI-generated malicious scripts used as offensive tooling during the intrusions. They found that the adversary leveraged commercially available tools to aid with the campaign.

Attribution remains unclear, with no named threat actor publicly identified. (Danny Palmer / Infosecurity Magazine)

Related: Dragos, Dark Reading, Industrial Cyber

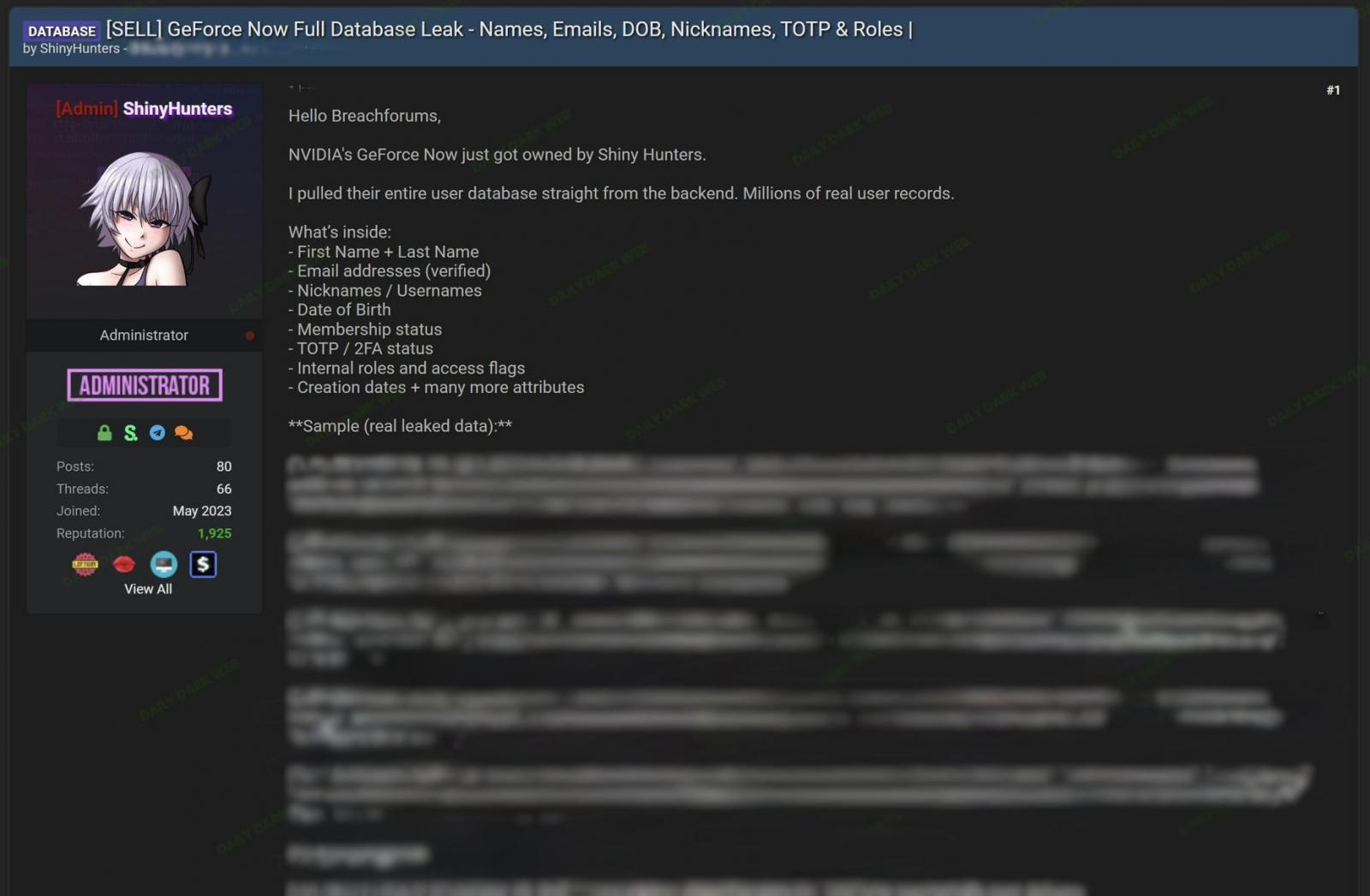

NVIDIA confirmed last week that GeForce NOW user information has been exposed in a data breach.

The gaming and hardware giant has clarified that the impact is limited to Armenia and was caused by a compromise of the infrastructure operated by a regional partner.

The company added that the incident did not impact its own network.

“Our investigation found no impact on NVIDIA-operated services. The issue is limited to systems run by a third-party GeForce NOW Alliance partner based in Armenia. We are working closely with the partner to support their investigation and resolution. Impacted users will be notified by GFN.am,” the company said.

The statement comes in response to a post last week on a hacker forum from a threat actor using the ShinyHunters nickname, claiming to have breached the GeForce NOW service and stolen millions of user records.

However, the ShinyHunters actor who published the breach on the hacker forum is believed to be an imposter.

According to the threat actor, the stolen information includes full names, email addresses, usernames, dates of birth, membership status, and 2FA/TOTP status.

The threat actor also posted samples of the stolen data and offered the full database for $100,000 paid in Bitcoin or Monero. (Bill Toulas / Bleeping Computer)

The new ransomware-as-a-service organization called The Gentlemen suffered a data breach that first surfaced on May 4 in a post to the cybercrime forum Breached. The post, with the subject line "The Gentlemen - hacked data for sale," requested $10,000, payable in bitcoin, "for the full data," with samples available on request.

Whether or not someone paid isn't clear, but on Friday, the same user listed a link to the file-sharing site MediaFire for downloading the stolen data for free.

"What makes the material especially interesting is that it appears to show the operational side of a modern ransomware ecosystem in real time, including infrastructure management, target selection, backend development, and OPSEC practices," said Milivoj Rajić, head of threat intelligence at cybersecurity firm DynaRisk, who's been poring over the leaked data.

The compromised communications included 8,200 lines of text from an internal chat tool, plus images of infected systems, and message timestamps largely corresponding to people who work Moscow hours, he said. (Matthew J. Schwartz / BankInfoSecurity)

Related: Cybersecurity Insiders

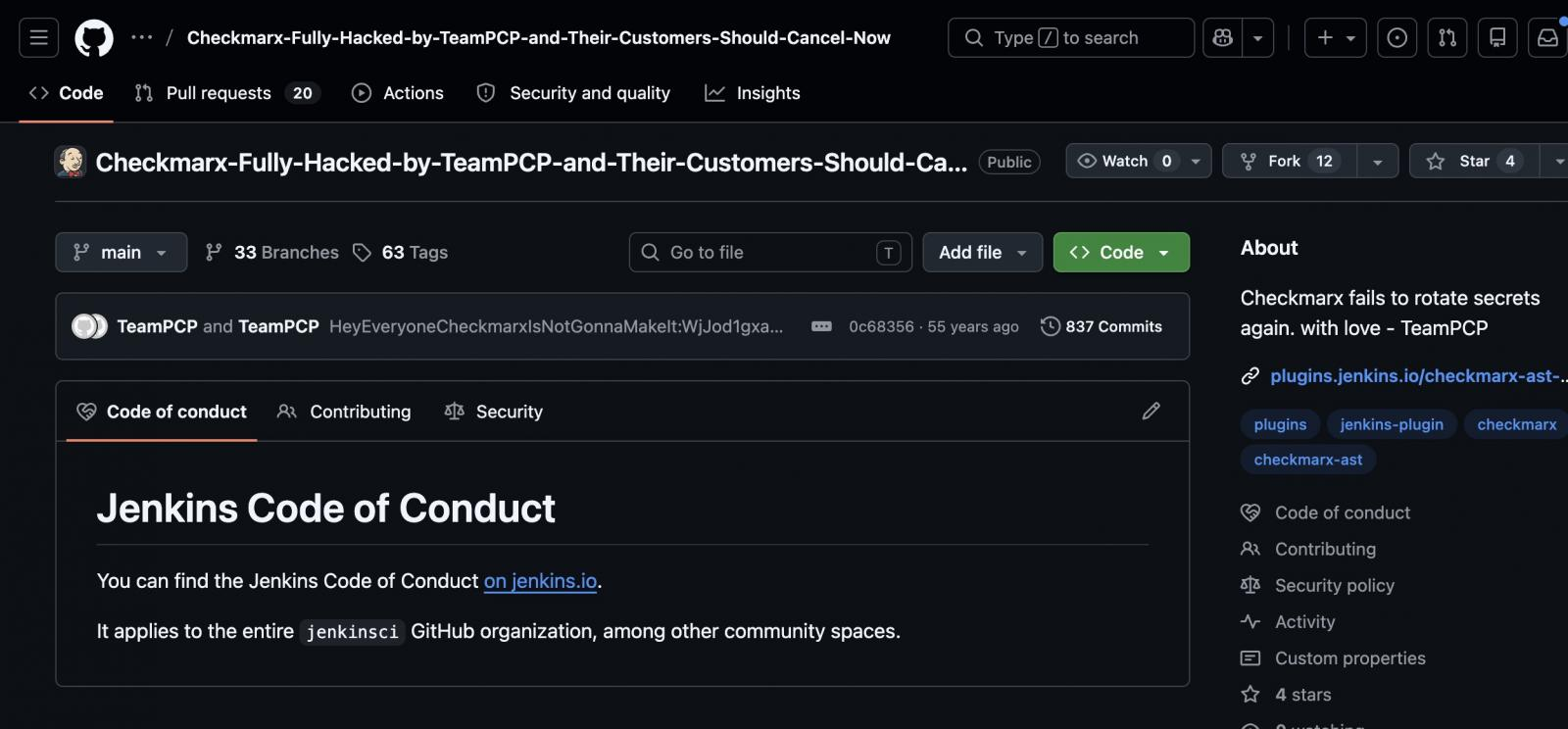

Checkmarx warned over the weekend that a rogue version of its Jenkins Application Security Testing (AST) plugin had been published on the Jenkins Marketplace.

The compromise was claimed by the TeamPCP hacker group, which initiated a spree of supply-chain attacks that included the Shai-Hulud campaigns on npm and the Trivy vulnerability scanner breach, resulting in the delivery of credential-stealing malware.

The Checkmarx AST plugin on the Jenkins Marketplace integrates security scanning into automated pipelines.

“We are aware that a modified version of the Checkmarx Jenkins AST plugin was published to the Jenkins Marketplace. We are in the process of publishing a new version of this plug-in,” Checkmarx said in the update.

This is the third incident in a series of supply-chain attacks the application security testing firm has suffered since late March.

According to offensive security engineer Adnan Khan, TeamPCP gained access to Checkmarx's GitHub repositories and backdoored the Jenkins AST plugin to deliver credential-stealing malware. (Bill Toulas / Bleeping Computer)

Related: Checkmarx, The Register

Apple released iOS 26.5 and iPadOS 26.5, introducing end-to-end encryption for RCS messages exchanged between iPhone and Android users.

E2EE for RCS requires both participants in the conversation to have a carrier that supports the feature, and carriers will be rolling out support over time. Encrypted RCS messages have a small lock symbol, and match the end-to-end encryption protections of iMessage. (Juli Clover / MacRumors)

Related: The Mac Observer, Thurrott.com, MacDailyNews, The Mac Observer, Macworld, AppleInsider, iPhone in Canada Blog, Ars Technica, Computerworld Security, Apple Post

Prime Minister Sanae Takaichi called for a cabinet-level effort to find cybersecurity weaknesses in Japan's infrastructure and reinforce them against the risk of such powerful new artificial intelligence tools as Anthropic's Mythos.

At a ministerial meeting, Takaichi directed officials, including Hisashi Matsumoto, minister in charge of cybersecurity, to lead a government-wide response.

The efforts are driven by concerns related to Claude Mythos, an AI model announced in April by US startup Anthropic that can discover and exploit software security flaws far faster than earlier software. (Satoshi Tezuka / Nikkei Asia)

Related: The Register, JiJi Press, The Chosun Daily, Kyodo News

The European Union is working on new rules to protect children from the addictive designs of social media platforms such as TikTok, Meta, and X, EU Commission President Ursula von der Leyen said.

"Sleep deprivation, depression, anxiety, self-harm, addictive behaviour, cyberbullying, grooming, exploitation, suicide. Risks are multiplying fast," von der Leyen said in a speech in Copenhagen.

Von der Leyen said the Commission would specifically target "addictive and harmful design practices" in its Digital Fairness Act (DFA), due to be proposed towards the end of the year.

The DFA would also set strict limits on the use of artificial intelligence in social media, she said.

Von der Leyen said the EU must consider setting a minimum age for access to social media, adding that the Commission might make a proposal this summer on the issue following recommendations from a panel of experts. (Inti Landauro / Reuters)

Related: Euronews, CNBC, Euractiv

The Australian Transaction Reports and Analysis Centre (Austrac) warned of a heightened threat of money laundering linked to artificial intelligence, which criminals have used to scale up activities, automate processes, and create fake documents.

“Criminals are increasingly using AI as a part of their money laundering toolkit — fabricating identities, forging documents and rapidly disguising the proceeds of scams,” said Brendan Thomas, Chief Executive Officer of Austrac.

“In some cases, technology is automating what used to be manual laundering techniques, raising the sophistication and scale of financial crime,” he said as the agency released annual updates to its risk guidelines.

Austrac, which acts as both a regulator and financial intelligence unit, issued the warning as it gave new risk assessments for money laundering, terrorism, and illegal transactions connected to Iran and North Korea.

It said Australia’s open, trade-integrated economy and reliance on cross-border financial and commercial systems raise exposure to the risks. (Richard Henderson / Bloomberg)

Related: Austrac, Tech in Asia

Netflix was sued by Texas Attorney General Ken Paxton, who accused the streaming company of spying on children and other consumers by collecting their data without consent, and designing its platform to be addictive.

Texas said that for years, Netflix has falsely represented to consumers that it did not collect or share user data, when it actually tracked and sold viewers' habits and preferences to commercial data brokers and advertising technology companies, making billions of dollars a year.

The Los Gatos, California-based company was also accused of quietly using "dark patterns" to keep users watching, including an autoplay feature that starts a new show when a different show ends. (Jonathan Stempel / Reuters)

Related: Reuters, BBC, Politico, The Verge, NBC News, CBS News, Chron, The Record, Forbes, Variety, CNET, Engadget, The Guardian, The Next Web, Texas Attorney General, Silicon UK, Variety, The Verge, MediaNama, Digit, Tech Times, Macau Business, Attorney General of Texas, Tech in Asia, NZ Herald, MediaPost, Courthouse News Service, Media Play News, Forbes, CNBC, The Independent, Deadline

Frame Security, a Tel Aviv, Israel-based human security and security awareness company, raised $50M in funding.

Backers included Index Ventures, Team8, and Picture Capital, with participation from Assaf Rappaport and Elad Gil. (CTech)

Related: FinSMEs, Globes, Fortune, FinTech Global, Security Week, Silicon Angle, MSSP Alert, Ventureburn, Ynet News, CityBiz, Fortune

Best Thing of the Day: It's Not Nice to Illegally Collect and Sell Californians' Data

California Attorney General Rob Bonta announced a $12.75 million settlement agreement with General Motors (GM) over allegations that the company violated the California Consumer Privacy Act (CCPA).

Bonus Best Thing of the Day: Grok Gets Its Due

Elon Musk’s artificial-intelligence model, Grok, lags far behind its fast-growing competitors, with downloads falling to about 8.3 million in April from a high of more than 20 million in January, according to analysis firm AppMagic.

Worst Thing of the Day: No Rest for the Weary

New York City’s public school system was contending with two distinct cybersecurity incidents simultaneously, one tied to the nationwide Canvas breach and another involving malware discovered on computers at a Manhattan campus.