AI bug hunters expose new weak point in Apple’s locked-down macOS

Shai-Hulud attack campaign hit two OpenAI employees, Hackers unwisely targeted Amnesty International's Security Lab chief, US and China to discuss AI guardrails, Anthropic warns of CCP AI dominance, DPRK's APT 37 is now posing as cops, MSFT warns of severe XSS flaw for Outlook web users, much more

Metacurity is the only daily cybersecurity briefing built for clarity, not agendas—no vendor spin, no echo chamber, just sharp, original aggregation and analysis of what actually matters to security leaders.

Security researchers say they have discovered a new way of circumventing Apple’s state-of-the-art security technology, using techniques they discovered while testing an early version of Anthropic’s Mythos AI software in April.

The researchers with Calif, a Palo Alto-based security research company, say the software they wrote links together two bugs and a handful of techniques to corrupt the Mac’s memory and then gain access to parts of the device that should be inaccessible.

It is what’s known as a privilege escalation exploit, and if it were chained together with other attacks, it could be used by a hacker to seize control of the computer.

The technique is noteworthy because Apple has put so much effort into locking down macOS, said Michał Zalewski, a security researcher who formerly worked at Google and who reviewed the Calif research but wasn’t involved in the testing.

Apple, which is deploying and testing frontier AI models to test and patch vulnerabilities, is reviewing the Calif report to validate its findings. “Security is our top priority, and we take reports of potential vulnerabilities very seriously,” a company spokeswoman said.

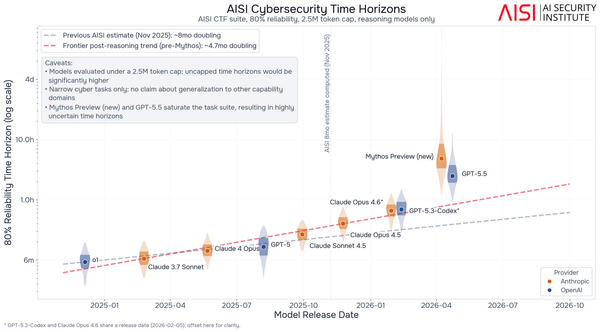

The bug-finding capabilities of the latest AI models from companies such as Anthropic and OpenAI have improved enough in recent months that many cybersecurity experts are now warning of a Bugmageddon, an unprecedented rash of security vulnerability discoveries that could cause headaches for the technology staffers who must patch them, and also represent an unprecedented cybersecurity risk. (Robert McMillan / Wall Street Journal)

Related: Calif, Mashable, 9to5Mac, The Mac Observer, Decrypt, MacRumors, iPhone in Canada, iClarified, PYMNTS, Cyber Security News, AppleInsider, WinBuzzer, Sherwood News, Silicon UK, MacDailyNews, Daring Fireball, Hacker News, Lobsters, MacRumors Forums

OpenAI confirmed that two of its employees had their devices impacted by a new Shai-Hulud supply-chain attack affecting several open-source projects.

However, after an investigation, the company said in a blog post that it found “no evidence that OpenAI user data was accessed, that our production systems or intellectual property were compromised, or that our software was altered.”

OpenAI said that the employees’ devices were compromised in an earlier attack on TanStack, a popular open-source library that helps developers build web apps.

On Monday, TanStack disclosed the attack and published a postmortem, saying hackers published 84 malicious versions of its software during a six-minute window. The project said a researcher detected the attack within 20 minutes. The malicious TanStack versions included malware designed to steal credentials from computers on which the software was installed and to self-propagate to other systems.

OpenAI said it had seen unauthorized access and theft of credentials “in a limited subset of internal source code repositories to which the two impacted employees had access.”

According to the AI giant, “only limited credential material” was taken from the affected code repositories. Because those repositories contained digital certificates used to sign OpenAI’s products, the company said it’s rotating the certificates “as a precaution,” which will require macOS users to update the app.

“We have found no evidence of compromise or risk to existing software installations,” the company wrote. (Lorenzo Franceschi-Bicchierai / TechCrunch)

Related: Bleeping Computer, OpenAI, AppleInsider, 9to5Mac, The Next Web, Reuters

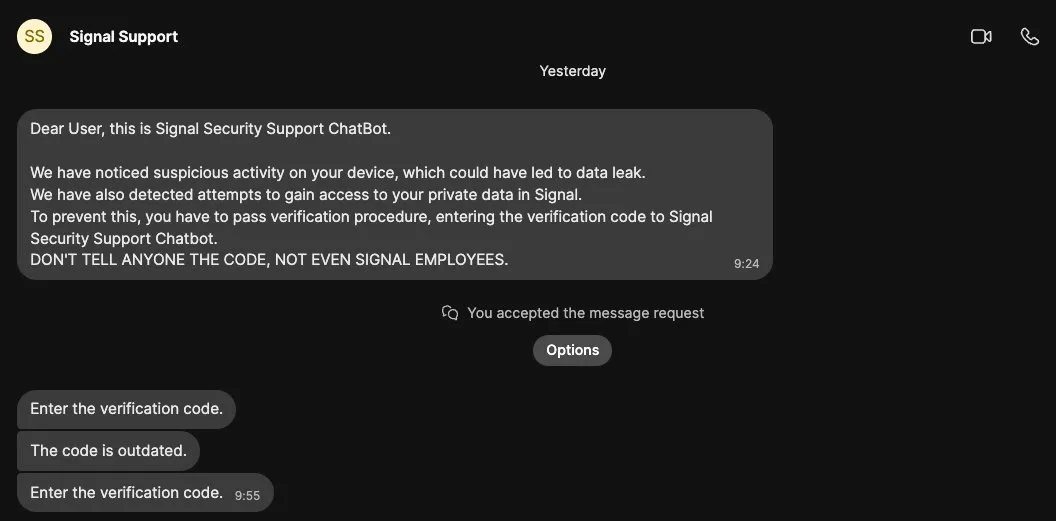

Early this year, malicious actors targeted security researcher Donncha Ó Cearbhaill, head of Amnesty International’s Security Lab, with an “unwise” attempt at hacking his Signal account.

He used the attempted hack as a good opportunity to jump into an unexpected investigation and discovered it was likely part of a wider hacking campaign targeting a large group of Signal users. The hackers’ strategies were to impersonate Signal, warn of bogus security threats, and try to trick targets into giving the hackers access to their accounts by linking them to a device controlled by the hackers.

Those techniques were exactly the same as those seen in a wider campaign that the US cybersecurity agency CISA, the United Kingdom's cybersecurity agency, and Dutch intelligence have all warned about and blamed on Russian government spies. Signal, too, has warned of phishing attacks targeting its users. German news magazine Der Spiegel found that the Russian hackers were able to compromise several people in Germany, including high-profile politicians.

Ó Cearbhaill said in a series of posts on X that he was able to figure out that he was one of more than 13,500 targets. He declined to reveal exactly how he investigated the hacking attempt and campaign to avoid revealing his hand to the hackers, but shared a few details about what he learned.

The researcher said he was able to identify the system the hackers were using, called “ApocalypseZ,” which automates the attack and allows the hackers to target many people in bulk with limited human oversight.

Ó Cearbhaill said that if Signal users are worried about getting targeted with this type of attack, they should turn on Registration Lock, a feature that lets users set a PIN for their account that prevents others from registering their phone number on a different device. (Lorenzo Franceschi-Bicchierai / TechCrunch)

In conjunction with the Trump-Xi summit, the United States and China will discuss guardrails on artificial intelligence, including establishing a protocol for keeping powerful AI models out of the hands of nonstate actors, Treasury Secretary Scott Bessent said.

Bessent, who was speaking from Beijing in an interview with CNBC, did not give more details, including when these discussions would take place. But Xi Jinping, China’s leader, and President Trump had been expected to discuss AI during their summit in the Chinese capital.

If these talks happen, it would be the first time the two countries formally take up the issue during Trump’s second term. The capabilities and usage of AI have grown rapidly, and so have concerns that this technology could be weaponized by hackers and terrorists, or spiral out of human control.

“The two AI superpowers are going to start talking,” Bessent said. “We’re going to set up a protocol in terms of, how do we go forward with best practices for AI to make sure nonstate actors don’t get ahold of these models.”

Still, Bessent made clear that the fierce competition between the United States and China for supremacy in AI — which has been a major hurdle to cooperation on safety — remained front of mind for US policymakers. Officials and experts in both countries have argued that they cannot slow technological development and risk losing out to their rivals. (Vivian Wang / New York Times)

Related: CNBC, Reuters, Carnegie Endowment for International Peace, NDTV Profit

Timed to coincide with the Trump-Xi summit, AI giant Anthropic released a policy paper arguing that it’s essential the US and its allies stay ahead of authoritarian governments like the Chinese Communist Party, or CCP.

Anthropic says AI will soon become powerful enough to be used to repress citizens on an unprecedented scale, and even to alter the balance of power among nations. And since AI is advancing more quickly by the day, Anthropic argues that there is only a limited period of time to set the conditions of the competition—and determine whether and how those threats materialize.

The company says the most important ingredient for developing AI is access to the computer chips on which the models are trained (or “compute”). Since the most capable chips are developed by American companies, the US government currently limits China’s supply by enforcing tight export controls on them.

Anthropic says recent history suggests these controls have been incredibly successful. In fact, AI labs in China have only built models close in intelligence to America’s because of their talent, their knack for exploiting loopholes around these export controls, and their large-scale distillation attacks that illicitly extract the innovations of American companies. (Anthropic)

Related: Sinocism, The Information, Times of India, Nikkei Asia, Startup Fortune, PaymentSecurity.io, Interesting Engineering, The Decoder

Researchers at the South Korean infosec company Genians say that APT37, a North Korean hacking group linked to the country's military intelligence agency, has posed as police investigators, defense officials, and North Korea experts in spear phishing attacks targeting South Korean security and policy figures.

Genians said it detected cyberattacks suspected of being linked to APT37, a North Korea-backed hacking group associated with the Reconnaissance General Bureau.

The latest attacks targeted people working in defense, national security, and North Korea-related fields. Spear phishing is a targeted hacking method that uses customized messages and information to trick specific individuals, rather than sending generic malicious emails to large groups.

According to Genians, the hackers used a range of impersonation tactics to lower victims' guard, including posing as police officers, defense officials, airline ticket issuers, and North Korea research groups.

In one message, the hackers claimed they had obtained North Korean nuclear power plant materials and were preparing a program to help researchers better understand the subject.

In another, a person claiming to be a police investigator said a hacking case had uncovered the recipient's email address on a suspicious server. (Asia Today translated by UPI)

Microsoft shared mitigations for a high-severity Exchange Server vulnerability exploited in attacks that allow threat actors to execute arbitrary code via cross-site scripting (XSS) while targeting Outlook on the web users.

Microsoft describes this security flaw (CVE-2026-42897) as a spoofing vulnerability affecting up-to-date Exchange Server 2016, Exchange Server 2019, and Exchange Server Subscription Edition (SE) software.

While patches aren't yet available to permanently fix the vulnerability, the company added that the Exchange Emergency Mitigation Service (EEMS) will provide automatic mitigation for Exchange Server 2016, 2019, and SE on-premises servers.

"An attacker could exploit this issue by sending a specially crafted email to a user. If the user opens the email in Outlook Web Access and certain interaction conditions are met, arbitrary JavaScript can be executed in the browser context," the Exchange Team said.

"Using EM Service is the best way for your organization to mitigate this vulnerability right away. If you have EM Service currently disabled, we recommend you enable it right away. Please note that EM Service will not be able to check for new mitigations if your server is running Exchange Server version older than March 2023."

Microsoft plans to release patches for Exchange SE RTM, Exchange 2016 CU23, and Exchange Server 2019 CU14 and CU15, but says that updates for Exchange 2016 and 2019 will only be available to customers enrolled in the Period 2 Exchange Server ESU program. (Sergiu Gatlan / Bleeping Computer)

Related: Microsoft, Neowin, Cyber Security News, Windows Report

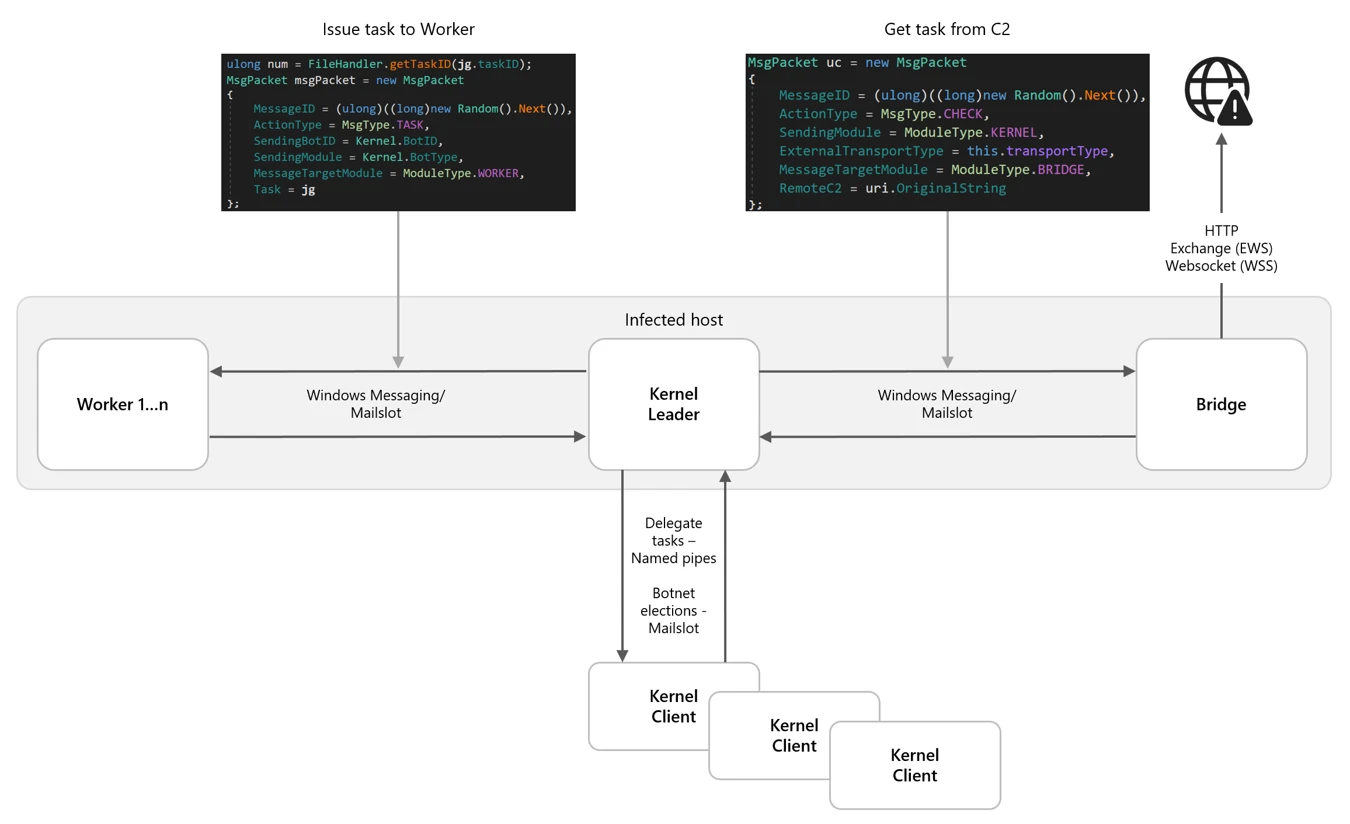

Microsoft threat intelligence reports that “Kazuar,” a long-running malware platform linked to the Russian state-sponsored threat group Secret Blizzard, has evolved into a stealthy peer-to-peer botnet designed for persistent intelligence collection.

The researchers say that Kazuar has transformed from a conventional backdoor into a modular ecosystem built around three separate components, Kernel, Bridge, and Worker modules, that collectively enable covert command-and-control (C2), distributed task execution, and resilient communications while minimizing detection opportunities.

Secret Blizzard, which the US Cybersecurity and Infrastructure Security Agency (CISA) attributes to Center 16 of Russia’s Federal Security Service (FSB), has historically targeted government, diplomatic, and defense-related organizations across Europe and Central Asia. (Bill Mann / Cyber Insider)

Related: Microsoft, Cyber Security News, Cyber Press

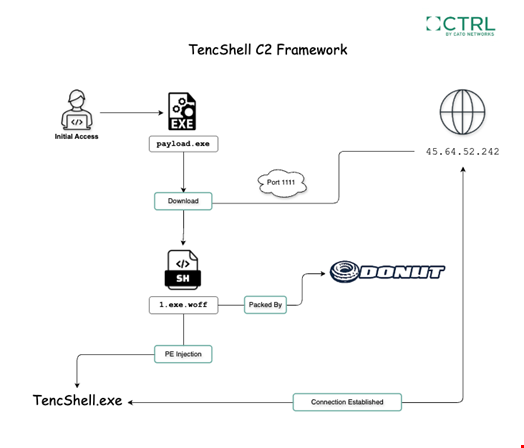

Researchers at Cato Networks’ Cyber Threats Research Lab (CTRL) report that they discovered an undocumented malware implant they call TencShell, suspected to be associated with a China-linked actor.

The attack chain used a first-stage dropper, Donut shellcode, a masqueraded .woff web-font resource, memory injection, and web-like command-and-control (C2) communication.

The operation aimed to infect the target with a customized Go-based implant derived from the open-source Rshell C2 framework.

Designed for cross-platform offensive security use, the original Rshell framework includes remote command execution, file and process management, terminal access, in-memory payload execution, multiple C2 transports, and a Model Context Protocol (MCP) server, used notably for AI agent communications and operations.

Cato CTRL named the implant ‘TencShell’ because it combines shell-style remote-control capabilities with C2 communication that imitates Tencent-like web service paths. (Kevin Poireault / Infosecurity Magazine)

Related: Cato Networks, Cyber Press, Cyber Security News

On the first day of Pwn2Own Berlin 2026, security researchers collected $523,000 in cash awards after exploiting 24 unique zero-days.

Today's highlight was Orange Tsai, who was awarded $175,000 after chaining 4 logic bugs to achieve a sandbox escape on Microsoft Edge.

Windows 11 was also hacked three times by Angelboy and TwinkleStar03 (working with the DEVCORE Internship Program), Marcin Wiązowski, and Kentaro Kawane of GMO Cybersecurity, each earning $30,000 in cash rewards for demonstrating new privilege escalation zero-days.

Valentina Palmiotti (chompie) of IBM X-Force Offensive Research (XOR) also collected $20,000 after rooting Red Hat Linux for Workstations and another $50,000 for a zero-day in the NVIDIA Container Toolkit.

Other successful attempts include k3vg3n chaining 3 bugs to take down LiteLLM ($40,000), Satoki Tsuji and haehae exploiting NVIDIA Megatron Bridge zero-days ($20,000), Compass Security and maitai of Doyensec hacking OpenAI's Codex coding agent (each earning $40,000), haehae dropping a Chroma zero-day ($20,000), and STARLabs SG an LM Studio zero-day ($40,000). (Sergiu Gatlan / Bleeping Computer)

Related: Zero Day Initiative, Security Affairs, HackRead, CyberInsider

Jaguar Land Rover’s annual profits have slumped by more than 99% as it counted the cost of US tariffs and a cyber-attack that disrupted its factories for months.

Britain’s largest carmaker made only £14m in profit before tax and exceptional items in the year to March, down from £2.5bn the year before, according to financial results published on Thursday.

The manufacturer, which employs 33,000 people in the UK, suffered a series of blows as Donald Trump’s automotive industry tariffs caused turmoil in its important export market. (Jasper Jolly / The Guardian)

Related: ET Auto, AM Online, Bloomberg, The Economic Times, Telegraph

Director of National Intelligence Tulsi Gabbard said she has tapped two individuals to coordinate work across US spy agencies to monitor threats to the 2026 elections, according to multiple sources familiar with the matter.

Dave Mastro, an official at the National Intelligence Council, and James Cangialosi, the deputy chief of the National Counterintelligence and Security Center, will jointly perform the duties of the intelligence community’s election threats executive, these people said.

The position was created during President Donald Trump’s first term and has been responsible for convening an interagency group to evaluate and publicize evidence of foreign meddling.

Mastro and Cangialosi last week reiterated the spy community’s commitment to safeguarding the midterms during closed-door briefings for House and Senate Intelligence committee aides.

The pair also said the Office of the Director of National Intelligence (ODNI) would follow the existing notification framework for foreign interference in US elections, according to two people familiar with the discussions. (Martin Matishak / The Record)

Related: NextGov/FCW

Akamai Technologies is acquiring Israeli cybersecurity startup LayerX Security, which develops solutions for browser-based AI usage control and secure enterprise browser (SEB) technology, for approximately $205 million in cash.

LayerX’s technology extends security protections to the browser layer, where a growing share of enterprise activity now takes place, including employee use of generative AI tools, SaaS-based AI services, and autonomous AI agents. (Meir Orbach / Calcalist)

Related: Business Insider, Tech in Asia, DeviceSecurity.io, Pulse 2.0, Boston Business Journal, SecurityWeek, Globes, LayerX

Best Thing of the Day: Let This Be the Beginning

UK regulator Ofcom has taken action following the complaint of the Good Law Project over illegal posts on X, forcing the site formerly known as Twitter to remove at least 85% of reported terror and hate posts within 48 hours.

Worst Thing of the Day: DPRK AI-Engineered Crypto Thefts Are No Joke

Two crypto hacks in April that together exceeded $600 million, widely believed to be the work of North Korea-linked groups, appear to have used artificial intelligence to select targets and design exploits, and could presage an existential threat to the crypto industry.

Closing Thought