AI and the collapse of authenticity: Best infosec long reads 5/16/26

AI has industrialized identity theft, Copyright law can remove nonconsensual AI porn, Anthropic might not appreciate that 'Mythos' came from H.P. Lovecraft's horror tales, How to solve AI's falsehood problems, Computationally unknowable mathematical proofs can create a new cryptographic technique


May 16: This week's long reads describe a world in which artificial intelligence is steadily eroding the boundary between what is authentic and what is synthetic. The Bloomberg piece shows how AI is making identity itself forgeable through cloned voices, fake documents, and synthetic personas. The MIT Technology Review article explores how deepfake pornography can overwrite reputations and lived experience with fabricated but convincing imagery. The HAUNT paper demonstrates that large language models will often reinforce falsehoods and even collaborate in invented memories when gently nudged by users, prioritizing conversational harmony over factual accuracy. The Quanta article suggests that even the mathematical foundations of digital security are becoming increasingly opaque and difficult for humans to understand intuitively, while the UnHerd essay captures the growing cultural unease surrounding systems that are persuasive, fluent, and only partially comprehensible.

What connects all of these stories is not simply “AI risk,” but a broader crisis of authenticity and trust. The internet created an information overload problem; AI is creating an authenticity problem, where simulation becomes easier to generate than verification. Increasingly, humans are interacting with systems optimized not for truth, but for fluency, engagement, personalization, and emotional resonance.

AI Is Making Digital Fraud Easier, Faster and Harder to Stop

Bloomberg's Jennah Haque argues that AI has industrialized identity theft by enabling criminals to automate phishing, generate convincing synthetic identities, clone voices and documents, and rapidly exploit breached personal data at a scale that is overwhelming traditional fraud defenses and exposing weaknesses in digital trust systems.

Today’s digital ecosystem creates the perfect storm for identity theft. AI makes every step — from stealing personal information held by companies to finding the right Social Security Number to steal to faking a driver’s license — easier and more sophisticated.

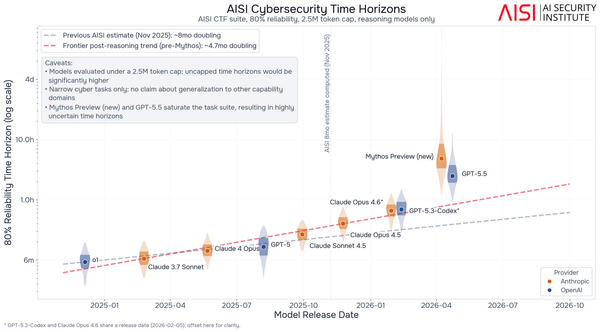

Some AI research labs are already acting with an abundance of caution due to fears of cyberattacks. Anthropic PBC is rolling out its new model, Mythos, to a select group of companies for testing against their own products and looking for vulnerabilities. Mythos is able to find loopholes in all sorts of operating systems, even exploiting Linux, the open-source code that powers most smart TVs, cars and other electronics, according to employees at Anthropic. OpenAI is also shopping its equivalent model around for companies to test.

When one researcher at Anthropic tested Mythos, they found it was able to pull off the equivalent of a digital bank robbery. The cautionary tales from Anthropic are prompting government officials to send up a flare to the financial sector.

The US saw the highest number of data compromises in 2025 since the nonprofit Identity Theft Resource Center (ITRC) began recording in 2005. AI is already a powerful force in cybercrimes: 40% of the 5,000 data breaches that consumer credit agency Experian serviced last year were powered by AI, said Michael Bruemmer, vice president of Consumer Protection. The firm predicts that this year agentic AI, which deploys multiple autonomous agents to achieve sophisticated goals with limited human oversight, will be the number one cause of data breaches.

However, its powers go beyond infiltrating institutional systems. Agentic AI has also sharpened the urgency of identity theft and digital fraud cases. Subagents can scan the dark web for vulnerable Social Security numbers and personal information in seconds. Agents can launch simultaneous attacks, contacting multiple banks at once under different identities, and can fill out complex government forms requesting loans. In February, a hacker used Anthropic’s Claude chatbot to attack various government agencies in Mexico, retrieving sensitive voter and tax information.

Cases of identity theft reported to the Federal Trade Commission have shot up nearly 20% year over year. Naureen Ali, US head of fraud at TransUnion, said more than $534 billion is lost to fraud globally each year.

Bruemmer outlined how AI has made these scams lethal: “AI does three things. It makes it faster for the hackers, attacks are more sophisticated and they’re better looking attacks. A phishing email from two or three years ago looks much different today, whether it’s with ChatGPT or Microsoft Copilot. They’re easily evading most people’s detection.”

I have gotten several data breach exposure letters over the years, ranging from random parking apps to companies as big as Meta, explaining that my information had been exposed. The letters have tended to disclose what was leaked: email, phone number, credit card information. The companies offer to pay for credit monitoring for six months, but nothing ever actually happens with that data, right?